Xcapit Labs / Privacy

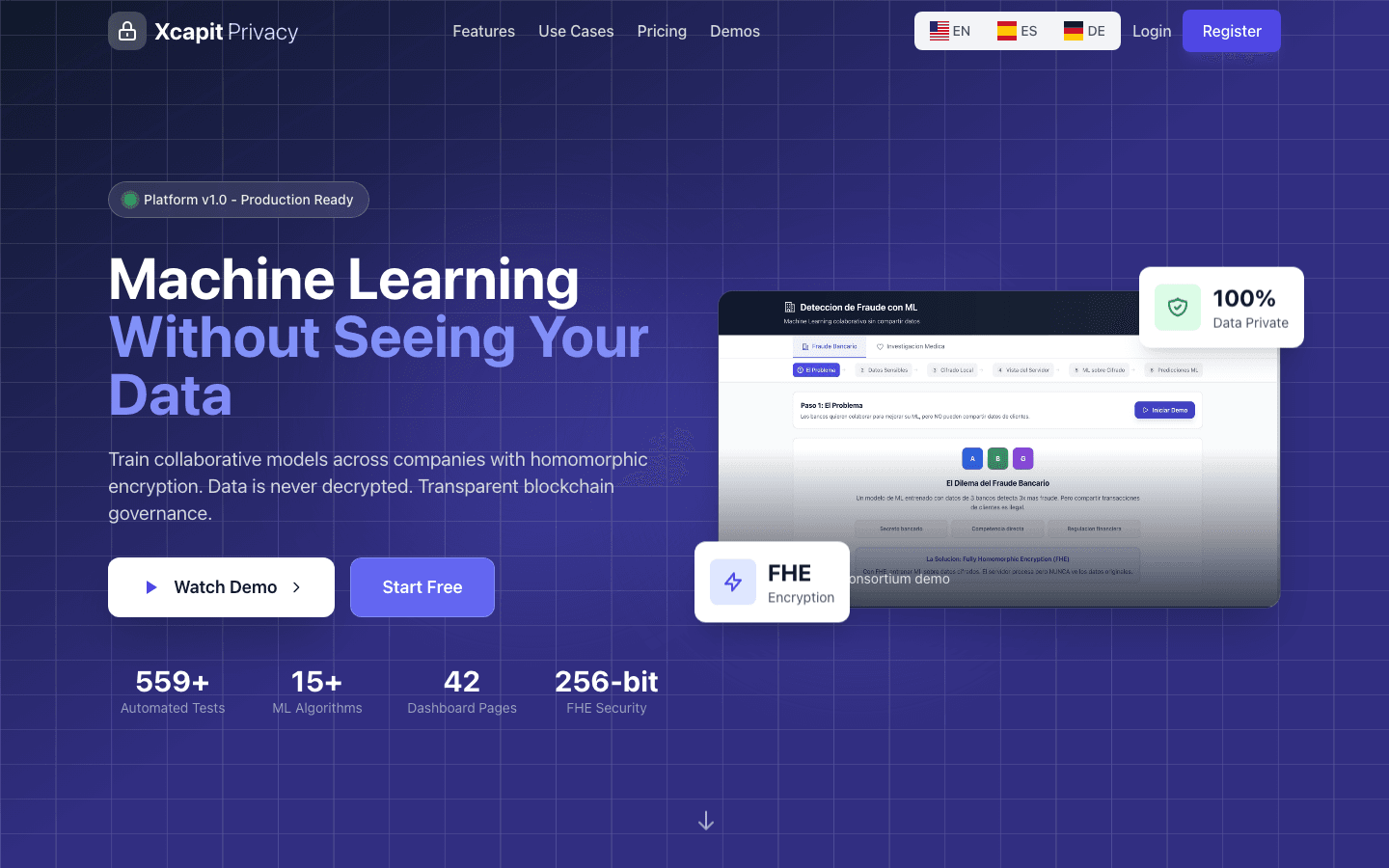

Machine Learning on Data That Stays Encrypted

Train AI models on sensitive data without ever decrypting it. Fully Homomorphic Encryption lets organizations collaborate on fraud detection, medical research, and risk analysis — while keeping their data completely private.

Capabilities

What Privacy Does

Fully Homomorphic Encryption

Data is encrypted using the CKKS scheme via TenSEAL. Computations happen directly on ciphertext — the platform never sees plaintext data, not even during model training.

Collaborative ML Training

Multiple organizations contribute encrypted data to shared models. Each party's data remains private while the model learns from the combined dataset.

Zero Data Exposure

Not even the platform operator can access the underlying data. Data stays encrypted under CKKS at a 256-bit security level from upload through training, so confidentiality rests on the encryption itself rather than on trust in the operator.

Blockchain Governance

Arbitrum smart contracts manage access control, audit trails, and model governance. Every computation is logged immutably for regulatory compliance.

Automated Testing Suite

559+ automated tests verify encryption integrity, model accuracy on encrypted data, and security boundaries. Continuous testing ensures privacy guarantees hold.

Privacy-by-Design Architecture

Built from the ground up with privacy as the core constraint. Every component is designed to minimize data exposure and maximize cryptographic guarantees.

Validation

Proven Guarantees

559+ Automated Tests

Comprehensive test suite covering encryption correctness, model accuracy on ciphertext, boundary conditions, and security edge cases.

15+ ML Algorithms

Support for classification, regression, clustering, and anomaly detection algorithms, all operating on fully encrypted data with results statistically equivalent to plaintext training.

256-bit FHE Security

CKKS encryption scheme with parameters targeting a 256-bit security level. Privacy rests on a well-studied lattice hardness assumption (Ring-LWE), and the security level is intended to exceed what banking and healthcare regulations require.

3-Page Architecture

Clean separation: Data Owner page for encryption and upload, ML Engineer page for model training, and Admin page for governance and audit.

Our Journey

From Research to Production

Privacy started as a research project exploring the intersection of homomorphic encryption and practical machine learning.

Research Phase

Explored TenSEAL and CKKS schemes for practical ML computation on encrypted data. Validated that FHE could support real-world algorithms with acceptable performance.

Platform Architecture

Designed the three-page architecture separating data owners, ML engineers, and administrators. Integrated Arbitrum for immutable governance and audit trails.

Algorithm Suite & Testing

Expanded to 15+ ML algorithms with 559+ automated tests. Verified that encrypted training produces results statistically equivalent to plaintext training.

Enterprise Readiness

Production hardening, compliance documentation, and enterprise integration capabilities. Privacy is ready for organizations that need to collaborate on AI without sharing data.

Cryptographic Foundations

Privacy combines cutting-edge homomorphic encryption with production-grade infrastructure.

CKKS scheme for approximate arithmetic on encrypted floating-point numbers. Optimized for ML operations like matrix multiplication and polynomial evaluation.
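To make "approximate arithmetic" concrete, the sketch below simulates only the fixed-point encoding idea behind CKKS: real numbers are stored as integers multiplied by a scaling factor, and every multiplication is followed by a rescale. It performs no encryption and does not use TenSEAL; the scale of 2^40 and all function names are assumptions chosen for illustration.

```python
# Plaintext simulation of CKKS-style fixed-point encoding.
# No encryption happens here; this only shows where the approximation comes from.

SCALE = 2 ** 40  # hypothetical scaling factor, not taken from the platform


def encode(x: float, scale: int = SCALE) -> int:
    """Represent a real number as a scaled integer (rounding loses a little precision)."""
    return round(x * scale)


def decode(v: int, scale: int = SCALE) -> float:
    """Map a scaled integer back to a real number."""
    return v / scale


def multiply(a: int, b: int, scale: int = SCALE) -> int:
    """Multiply two encoded values, then rescale.

    The raw product carries scale**2. Dividing by scale once (with rounding)
    returns it to the working scale at the cost of a small rounding error.
    """
    return (a * b + scale // 2) // scale


def dot(xs: list, ws: list) -> int:
    """Dot product of two encoded vectors using only additions and multiplications."""
    return sum(multiply(x, w) for x, w in zip(xs, ws))


if __name__ == "__main__":
    features = [0.1, 0.2, 0.3]
    weights = [1.5, -2.0, 0.25]
    encoded = dot([encode(v) for v in features], [encode(v) for v in weights])
    exact = sum(f * w for f, w in zip(features, weights))
    print("approximate:", decode(encoded))
    print("exact:      ", exact)
```

The decoded result differs from the exact dot product only by a tiny rounding error, which is the kind of error a larger scaling factor reduces.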

Smart contracts for access control, computation audit trails, and model governance. Immutable record of every encrypted operation.
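One way to picture a tamper-evident audit trail is a hash chain, where each entry commits to the one before it. The sketch below is a local Python illustration of that idea only. It is not the platform's Arbitrum contract code, and the record fields and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + payload).hexdigest()


def append(log: list, record: dict) -> None:
    """Append a record, chaining it to the current tail of the log."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "hash": entry_hash(prev, record)})


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["hash"] != entry_hash(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    # Hypothetical audit records; the field names are illustrative only.
    append(log, {"actor": "ml-engineer-1", "op": "train", "model": "fraud-v1"})
    append(log, {"actor": "admin-1", "op": "grant_access", "model": "fraud-v1"})
    print("chain valid:", verify(log))
    log[0]["record"]["op"] = "delete"  # simulate tampering with history
    print("chain valid after tampering:", verify(log))
```

A blockchain adds what this local sketch lacks: the chain is replicated and agreed on by parties who do not trust each other, so no single operator can silently rewrite it.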

High-performance API for encrypted data operations. React dashboard for data owners, ML engineers, and administrators.

Roadmap

Vision 2026

Privacy is evolving from a platform into a standard for privacy-preserving AI collaboration.

Use Cases

Who Uses Privacy

Healthcare Research

Hospitals and research institutions train diagnostic models on combined patient data without sharing medical records. Privacy-compliant AI for better outcomes.

Financial Services

Banks and fintechs collaborate on fraud detection and credit scoring models without exposing customer transaction data to competitors.

Government & Public Sector

Public agencies run analytics on sensitive citizen data — census, tax, health — while maintaining constitutional privacy guarantees.

FAQ

Frequently Asked Questions

What is Fully Homomorphic Encryption (FHE)?

FHE allows computations to be performed directly on encrypted data without decrypting it first. The results, when decrypted, match what the same computations would produce on plaintext (with CKKS, up to a small approximation error). This means the platform never needs to see your actual data.
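The core idea, computing on ciphertexts and decrypting only the result, can be shown with a much simpler scheme. The sketch below uses a toy Paillier cryptosystem, which supports only addition on encrypted values. It is not FHE, not CKKS, and not what Privacy runs; the small primes and function names are assumptions for illustration and provide no real security.

```python
import math
import secrets


def keygen(p: int = 104729, q: int = 1299709):
    """Build a toy Paillier key pair from two small primes (insecure, demo only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # modular inverse of lam mod n (Python 3.8+)
    return n, (lam, mu)


def encrypt(n: int, m: int) -> int:
    """Encrypt integer m (0 <= m < n) with generator g = n + 1 and fresh randomness."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1  # random value in 1..n-1
        if math.gcd(r, n) == 1:
            break
    return (1 + m * n) % n2 * pow(r, n, n2) % n2


def decrypt(n: int, private_key, c: int) -> int:
    """Recover the plaintext from ciphertext c."""
    lam, mu = private_key
    n2 = n * n
    return (pow(c, lam, n2) - 1) // n * mu % n


def add_encrypted(n: int, c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return c1 * c2 % (n * n)


if __name__ == "__main__":
    n, private_key = keygen()
    c_sum = add_encrypted(n, encrypt(n, 20), encrypt(n, 22))
    # The party computing c_sum never sees 20 or 22; only the key holder can decrypt.
    print(decrypt(n, private_key, c_sum))
```

Fully homomorphic schemes extend this property to both addition and multiplication, which is what makes model training on ciphertext possible.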

Does encryption affect model accuracy?

The CKKS scheme used by Privacy supports approximate arithmetic, which introduces minimal noise. Our 559+ tests verify that encrypted training produces statistically equivalent results to plaintext training across all supported algorithms.

How does Privacy comply with data regulations?

Because data is never decrypted during processing, the platform operator cannot access it even if compelled. This goes beyond the confidentiality safeguards that GDPR, HIPAA, and most data protection regulations require.

Can we use our own ML algorithms?

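Privacy supports 15+ built-in algorithms and provides an SDK for custom algorithm integration. Any algorithm that can be expressed as polynomial operations (additions and multiplications) is compatible with FHE computation, within the multiplication depth that the encryption parameters allow.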
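As an illustration of what "expressed as polynomial operations" means in practice, the sketch below replaces the sigmoid function, which needs an exponential, with a degree-3 polynomial that needs only additions and multiplications. The coefficients are a commonly used approximation for inputs in roughly [-5, 5]; they are an assumption here, not something confirmed to be part of the Privacy SDK, and the code runs on plain floats rather than ciphertexts.

```python
import math

# Degree-3 polynomial approximation of sigmoid, lowest-order coefficient first:
# sigmoid(x) ~ 0.5 + 0.197*x - 0.004*x**3 (assumed, reasonable on about [-5, 5]).
SIGMOID_COEFFS = [0.5, 0.197, 0.0, -0.004]


def polyval(coeffs, x: float) -> float:
    """Evaluate a polynomial with Horner's rule: only additions and multiplications."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * x + c
    return result


def sigmoid(x: float) -> float:
    """Exact sigmoid, used only as a plaintext reference."""
    return 1.0 / (1.0 + math.exp(-x))


def max_error(lo: float = -5.0, hi: float = 5.0, steps: int = 200) -> float:
    """Largest absolute gap between the polynomial and the exact sigmoid on [lo, hi]."""
    worst = 0.0
    for i in range(steps + 1):
        x = lo + (hi - lo) * i / steps
        worst = max(worst, abs(polyval(SIGMOID_COEFFS, x) - sigmoid(x)))
    return worst


if __name__ == "__main__":
    for x in (-2.0, 0.0, 2.0):
        print(x, round(polyval(SIGMOID_COEFFS, x), 4), round(sigmoid(x), 4))
    print("max error on [-5, 5]:", round(max_error(), 4))
```

The same pattern applies to other non-polynomial steps in a custom algorithm: pick the input range, fit a low-degree polynomial, and check that the approximation error is acceptable for the model.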

Ready to unlock AI on private data?

Whether you're in healthcare, finance, or government — Privacy lets you train AI models without compromising data confidentiality.